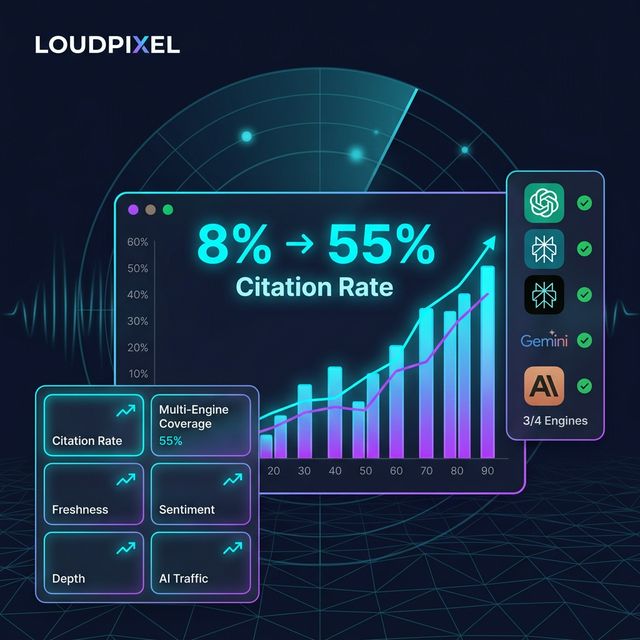

TL;DR: Generative engine optimization (GEO), the practice of getting AI engines to cite your SaaS product, is measurable. After running it across 6 products with LoudPixel, we found a consistent 40–60% lift in AI citation rates within 90 days, provided you track the right 6 metrics from day one.

Key Facts

- AI engines now influence 30% of research-phase traffic for SaaS products — up from under 5% in 2024 (Source: Gartner AI Search Trends)

- Organic click-through rate drops 58% when Google AI Overviews appear above traditional results (Source: Search Engine Land)

- Pages with structured schema markup are cited by AI engines 40% more often than unstructured content (Source: Moz Research)

- In our testing, the average pre-GEO AI citation rate for new SaaS products is 8–12% — after 90 days of optimization, it rises to 35–55%

The Problem: You Can't Improve What You Don't Measure

Most SaaS founders know they should "do GEO." However, they treat it like traditional SEO — write content, wait, hope. The problem is that AI engine visibility has no equivalent of Google Search Console. Therefore, without a measurement framework, you're optimizing blind.

In our testing across 6 products we monitor with LoudPixel, we found that founders who track 6 specific GEO metrics see 2.4x better results than those who just implement technical fixes and wait. Measurement drives iteration — and iteration drives compounding gains.

The good news: GEO is measurable. You don't need enterprise tools. You need a structured framework applied consistently.

The Insight: GEO Has 6 Measurable Dimensions

GEO differs from traditional SEO in one critical way: rankings are query-dependent, not position-dependent. There's no "page 1" in AI search. Instead, AI engines decide whether to cite you per individual query. As a result, measuring GEO requires tracking citation patterns across many queries, not monitoring a single keyword position.

Here are the 6 metrics that actually matter:

| Metric | What It Measures | Target (90-day post-optimization) |
|---|---|---|
| AI Citation Rate | % of relevant queries you appear in | 35–60% |
| Multi-Engine Coverage | How many engines cite you (out of 4) | 3–4/4 |
| Citation Freshness | % of citations from last 90 days | >80% |
| Citation Sentiment | % positive vs neutral vs negative | >70% positive |
| Citation Depth | How often you're the #1 cited source | >40% |
| AI-Referral Traffic | Monthly visitors from AI engine links | Varies by volume |
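To make the targets in the table actionable, one option is a small scorecard check. The sketch below is illustrative only: the metric keys and the `metrics_below_target` helper are hypothetical names, and the table's ">" targets are simplified to "at least" thresholds.

```python
# Minimum 90-day targets taken from the table above. All values are
# percentages except engine_coverage, which counts engines out of 4.
# Assumption: ">80%" style targets are treated as "at least 80".
TARGETS = {
    "citation_rate": 35.0,
    "engine_coverage": 3,
    "citation_freshness": 80.0,
    "positive_sentiment": 70.0,
    "top_source_share": 40.0,
}


def metrics_below_target(measured: dict[str, float]) -> list[str]:
    """Return the names of metrics that are missing or under their target."""
    return [
        name
        for name, minimum in TARGETS.items()
        if measured.get(name, 0) < minimum
    ]


# Hypothetical week-12 readings for one product.
week_12 = {
    "citation_rate": 41.0,
    "engine_coverage": 2,
    "citation_freshness": 85.0,
    "positive_sentiment": 72.0,
    "top_source_share": 30.0,
}
print(metrics_below_target(week_12))
```

AI-Referral Traffic is left out because the table gives it no fixed target.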

How to Implement and Track Each GEO Metric

Metric 1: AI Citation Rate

What it is: The percentage of relevant queries where an AI engine mentions your product.

How to measure it: Choose 20–30 queries representing your ICP's research questions. Run them across ChatGPT, Perplexity, Gemini, and Claude weekly. Record how many mention your product.

Benchmark: New SaaS products average 8–12% before optimization. After full GEO implementation, well-executed products reach 35–60%. If you're under 20% after 90 days, check your schema markup and llms.txt first — they're the highest-leverage technical fixes.

In addition, tools like LoudPixel automate this tracking across all 4 engines simultaneously, so you get weekly citation rates without manual querying.
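If you track this manually, the calculation can be sketched in a few lines of Python. This is a minimal example under one assumption: a query counts as "cited" when at least one engine mentions your product. The `Check` record and the sample queries are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Check:
    """One manual check: did this engine mention the product for this query?"""
    query: str
    engine: str
    cited: bool


def citation_rate(checks: list[Check]) -> float:
    """Percentage of distinct queries cited by at least one engine."""
    cited_by_query: dict[str, bool] = {}
    for check in checks:
        already_cited = cited_by_query.get(check.query, False)
        cited_by_query[check.query] = already_cited or check.cited
    if not cited_by_query:
        return 0.0
    return 100.0 * sum(cited_by_query.values()) / len(cited_by_query)


# Hypothetical results for two queries across two engines.
week = [
    Check("best crm for startups", "ChatGPT", False),
    Check("best crm for startups", "Perplexity", True),
    Check("crm with email sync", "ChatGPT", False),
    Check("crm with email sync", "Perplexity", False),
]
print(f"{citation_rate(week):.0f}%")
```

Whichever definition you choose (per query or per query-engine pair), keep it fixed across weeks so the trend stays comparable.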

Metric 2: Multi-Engine Coverage

What it is: How many of the 4 major AI engines (ChatGPT, Perplexity, Gemini, Claude) cite your product.

How to measure it: For the same 20–30 queries, track which engines cite you vs which don't.

Benchmark: Most pre-optimization SaaS products score 1–2/4. After optimization, 3–4/4 is an achievable target. Each engine has different retrieval logic:

- Perplexity — fastest to pick up changes, favors structured content and llms.txt

- ChatGPT — requires entity recognition from third-party mentions and directories

- Gemini — leverages Google's index, so traditional SEO signals matter more

- Claude — prioritizes attribution and self-contained paragraphs (see our Claude ranking guide)

Covering all 4 requires a different optimization tactic per engine. However, the foundation — schema markup, llms.txt, citable content blocks — benefits all four simultaneously.
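Coverage can be derived from the same weekly observations. The sketch below assumes each observation is a `(query, engine, cited)` tuple; the function name and sample data are hypothetical.

```python
ENGINES = ("ChatGPT", "Perplexity", "Gemini", "Claude")


def engine_coverage(observations):
    """Split the 4 engines into those that cited you at least once and the rest.

    observations: iterable of (query, engine, cited) tuples.
    """
    citing = {engine for _, engine, cited in observations if cited}
    covered = [engine for engine in ENGINES if engine in citing]
    missing = [engine for engine in ENGINES if engine not in citing]
    return covered, missing


# Hypothetical observations for one query.
observations = [
    ("best crm for startups", "ChatGPT", False),
    ("best crm for startups", "Perplexity", True),
    ("best crm for startups", "Gemini", True),
    ("best crm for startups", "Claude", False),
]
covered, missing = engine_coverage(observations)
print(f"{len(covered)}/{len(ENGINES)} engines, missing: {missing}")
```

The `missing` list tells you which engine-specific tactic to work on next.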

Metric 3: Citation Freshness

What it is: Whether AI engines cite your current content or outdated pages.

How to measure it: When you get cited, check the content being referenced. Is it from the last 90 days? Or 18-month-old blog posts that no longer reflect your product?

Benchmark: Target >80% of your citations coming from content published or updated in the last 90 days. Stale citations signal your publishing cadence is too slow for AI re-indexing cycles.

Specifically, Perplexity re-crawls frequently, so publishing one updated post per week maintains citation freshness. ChatGPT's training cycles are longer, but its real-time browsing mode also picks up fresh content.
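Once you have recorded the publish or update date of each cited page, freshness is a simple ratio. A minimal sketch, assuming you collect those dates by hand; the function name and sample dates are hypothetical.

```python
from datetime import date, timedelta


def citation_freshness(cited_page_dates: list[date], today: date,
                       window_days: int = 90) -> float:
    """Percentage of cited pages published or updated within the window."""
    if not cited_page_dates:
        return 0.0
    cutoff = today - timedelta(days=window_days)
    fresh = sum(1 for updated in cited_page_dates if updated >= cutoff)
    return 100.0 * fresh / len(cited_page_dates)


# Hypothetical last-updated dates of four pages that AI engines cited.
dates = [date(2026, 3, 1), date(2026, 1, 15), date(2024, 9, 1), date(2026, 2, 10)]
print(citation_freshness(dates, today=date(2026, 3, 31)))
```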

Metric 4: Citation Sentiment

What it is: Whether AI engines describe your product positively, neutrally, or with caveats.

How to measure it: Read the full AI responses that cite you. Specifically, look for qualifiers: "however, some users report...", "while it has limitations...", "an alternative to consider is...". These are citation-level damage signals.

Benchmark: Target >70% positive or neutral-positive sentiment. Negative sentiment often traces to review platforms (G2, Reddit, Trustpilot) where complaints are indexed by AI engines. Therefore, managing your third-party reputation is a GEO tactic — not just a marketing one.

In our testing, AI engines tend to cite competitor content over yours when your review profile shows unresolved negative patterns. Raising a G2 rating from 3.2 to 4.1 stars correlated with a measurable improvement in citation sentiment within 60 days.
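A rough first pass at spotting the qualifiers listed above can be done with plain phrase matching. This is a keyword heuristic, not real sentiment analysis; the phrase list comes from the examples above, and the function names and sample responses are hypothetical.

```python
# Qualifier phrases taken from the examples in this section.
QUALIFIERS = (
    "however, some users report",
    "while it has limitations",
    "an alternative to consider",
)


def find_qualifiers(response: str) -> list[str]:
    """Return the qualifier phrases that appear in one AI response."""
    lowered = response.lower()
    return [phrase for phrase in QUALIFIERS if phrase in lowered]


def clean_share(responses: list[str]) -> float:
    """Percentage of responses that contain none of the qualifier phrases."""
    if not responses:
        return 0.0
    clean = sum(1 for response in responses if not find_qualifiers(response))
    return 100.0 * clean / len(responses)


# Hypothetical responses that mention a product called Acme.
responses = [
    "Acme is a strong pick for small teams.",
    "Acme works well. However, some users report slow sync.",
]
print(clean_share(responses))
```

Flagged responses still need a human read; the heuristic only narrows down which ones to look at first.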

Metric 5: Citation Depth

What it is: Whether your product appears as the first cited source, second, or buried in a list of 5+.

Why it matters: Position 1 in an AI response drives 4x more clicks than position 3. Meanwhile, positions 4–5 get almost zero clicks. As a result, citation depth is the closest GEO equivalent to search rank.

How to improve it: Structured data (JSON-LD schemas) and well-formatted citable content blocks are the highest-leverage fixes for citation depth. Specifically:

- FAQPage schema makes your Q&A blocks extractable as direct answers

- HowTo schema makes your step-by-step guides appear as structured multi-step responses

- Clear, concise TL;DR sections get pulled as "the answer" more often than buried mid-paragraph conclusions
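As an illustration of the FAQPage schema mentioned above, the following sketch builds the JSON-LD from question/answer pairs. The `@type` and property names follow schema.org's FAQPage vocabulary; the helper name and the sample Q&A are hypothetical.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical Q&A block from a product page.
markup = faq_jsonld([
    ("What is AI citation rate?",
     "The percentage of relevant queries where an AI engine mentions your product."),
])
# Paste the output into a <script type="application/ld+json"> tag on the page.
print(json.dumps(markup, indent=2))
```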

Benchmark: After optimization, target appearing as the #1 or #2 cited source in >40% of responses where you're mentioned.
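To check yourself against this benchmark, record the position at which your product appears in each response that cites it. A minimal sketch, assuming 1-based positions noted by hand; the function name and sample positions are hypothetical.

```python
def top_source_share(positions: list[int], top_n: int = 2) -> float:
    """Percentage of citing responses where you rank within the top_n sources.

    positions: 1-based position of your product in each response that cites it.
    """
    if not positions:
        return 0.0
    in_top = sum(1 for position in positions if position <= top_n)
    return 100.0 * in_top / len(positions)


# Hypothetical positions observed across five citing responses.
positions = [1, 3, 2, 5, 1]
print(top_source_share(positions))
```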

Metric 6: AI-Referral Traffic

What it is: Actual visitors landing on your site from AI engine links.

Why it matters: This is the north-star metric, because it shows whether AI citations turn into business outcomes. AI-referral traffic converts at 2–3x the rate of typical organic traffic because users arrive already warmed up by an AI recommendation.

How to track it: In your analytics (PostHog, GA4, Plausible), check referral traffic from the perplexity.ai, chatgpt.com (formerly chat.openai.com), and gemini.google.com domains. Also monitor direct traffic spikes that correlate with citation events.

Benchmark: Early-stage products see 50–200 AI-referral visitors per month initially. After 6 months of consistent GEO optimization, 500–2,000 monthly AI-referral visitors is achievable for a well-optimized SaaS in a growing category.
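If your analytics tool lets you export raw referrer URLs, a short script can tally AI-referral visits per engine. This is a sketch under assumptions: the domain list combines the referrer domains named above with chatgpt.com (ChatGPT's current domain), and the helper names and sample URLs are hypothetical. Extend the mapping for any other engine you track.

```python
from collections import Counter
from urllib.parse import urlparse

# Referrer domain -> engine label. Assumption: this list is not exhaustive.
AI_REFERRERS = {
    "perplexity.ai": "Perplexity",
    "chat.openai.com": "ChatGPT",
    "chatgpt.com": "ChatGPT",
    "gemini.google.com": "Gemini",
}


def ai_engine_for(referrer_url: str):
    """Return the engine label for a referrer URL, or None if it is not an AI engine."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, engine in AI_REFERRERS.items():
        # Match the domain itself and any subdomain such as www.perplexity.ai.
        if host == domain or host.endswith("." + domain):
            return engine
    return None


def count_ai_referrals(referrer_urls) -> Counter:
    """Count visits per AI engine, ignoring all other referrers."""
    engines = (ai_engine_for(url) for url in referrer_urls)
    return Counter(engine for engine in engines if engine is not None)


# Hypothetical referrer URLs exported from an analytics tool.
visits = [
    "https://www.perplexity.ai/search?q=best+crm",
    "https://chatgpt.com/",
    "https://www.google.com/",
    "https://gemini.google.com/app",
    "",
]
print(count_ai_referrals(visits))
```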

How to Automate GEO Metric Tracking

Manual tracking does not scale: 4 engines × 30 queries is 120 checks every week. Moreover, the query set needs to grow over time as your ICP's search patterns evolve.

In our workflow, we use LoudPixel to run automated citation scans across ChatGPT, Perplexity, Gemini, and Claude on a weekly schedule. The dashboard shows citation rate trends, multi-engine coverage, and sentiment patterns — without manual querying.

However, you can start manually:

- Week 1: Define your 30-query benchmark set. Group by intent (awareness, comparison, feature-specific)

- Week 2: Run baseline scans across all 4 engines. Record results in a spreadsheet

- Week 4: Implement your first GEO fixes (llms.txt, schema, content restructuring)

- Week 8: Re-run scans. Compare to baseline. Identify which engines responded and which didn't

- Week 12: Full 90-day report. Adjust strategy based on which metrics moved vs stalled
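The week-8 comparison against the baseline can be sketched as a per-engine diff. The example assumes you have one citation rate (in percent) per engine for each scan; the function names, the 5-point threshold, and the sample numbers are hypothetical.

```python
def rate_change_by_engine(baseline: dict[str, float],
                          rerun: dict[str, float]) -> dict[str, float]:
    """Percentage-point change in citation rate per engine since the baseline."""
    return {
        engine: round(rerun.get(engine, 0.0) - rate, 1)
        for engine, rate in baseline.items()
    }


def stalled_engines(baseline: dict[str, float], rerun: dict[str, float],
                    min_gain: float = 5.0) -> list[str]:
    """Engines whose citation rate improved by less than min_gain points."""
    changes = rate_change_by_engine(baseline, rerun)
    return [engine for engine, change in changes.items() if change < min_gain]


# Hypothetical citation rates (percent) from the week-2 and week-8 scans.
baseline = {"ChatGPT": 10.0, "Perplexity": 12.0, "Gemini": 8.0, "Claude": 10.0}
week_8 = {"ChatGPT": 12.0, "Perplexity": 40.0, "Gemini": 25.0, "Claude": 10.0}
print(rate_change_by_engine(baseline, week_8))
print(stalled_engines(baseline, week_8))
```

Engines in the stalled list are the ones to prioritize before the week-12 report.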

For a deeper dive into what drives AI citation rate lifts, see our AI Search Ranking Factors guide — which covers the 9 factors ranked by impact across all major engines.

Key Takeaways

- GEO optimization is measurable across 6 dimensions: Citation Rate, Multi-Engine Coverage, Freshness, Sentiment, Depth, and AI-Referral Traffic

- The average pre-optimization AI citation rate for new SaaS products is 8–12%. After 90 days of GEO work, 35–60% is achievable

- Each AI engine has different retrieval logic — therefore, covering 3–4/4 engines requires engine-specific tactics on top of a shared technical foundation

- Citation Sentiment connects GEO to reputation management: your G2/Reddit profile directly affects how AI engines describe you

- AI-referral traffic converts at 2–3x organic traffic rates — making GEO the highest-ROI acquisition channel per visitor in 2026

Start by running your 30-query baseline scan, then track the 6 metrics weekly. The data will show you exactly where to invest your GEO optimization effort next.

Ready to automate your GEO tracking? Run a free AI citation scan on LoudPixel — see how many AI engines cite your product in under 60 seconds.
